Going Cloud: the 8 don’ts

Okay, let’s face it: the world is finally figuring out that the cloud is for everyone, not just for large-scale enterprises. This is a big step forward, but when it comes to new adopters, there are still many misconceptions and wrong expectations.

Wrong expectations are probably the most common reason for failure, because they often lead to disasters where the only way out is moving back to a legacy infrastructure design.

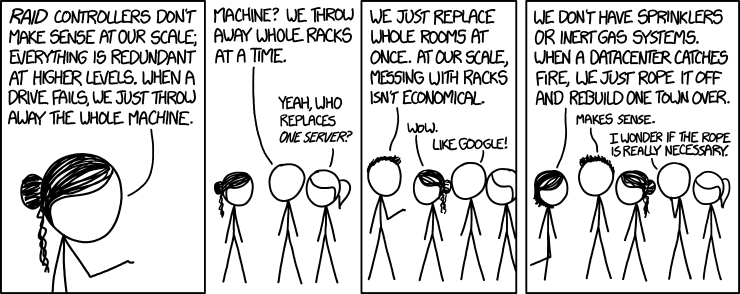

(Image Source: XKCD)

But it turns out that it’s easier than you might expect. There is a basic set of rules and guidelines, and if you follow them, you can be successful without much trouble.

In this article, let’s begin with the 8 don’ts:

- Never, ever trust a single instance of a given service. Don’t rely blindly on redundant database platforms, replicated block devices, and so forth: they can still fail, and accounting for this kind of failure at the application layer is the way to go.

- Don’t put all your eggs in one bucket: cloud platforms are available in different geographical locations by nature, so you should really take advantage of this. True geographical redundancy can be hard to achieve at the beginning, but at least try to have read replicas spread around the world, so that if your main region goes down, your service is only degraded rather than completely unreachable.

- Never think small. Some design patterns may seem like overkill at first sight, but believe me, they are not. If you design your service so that it is ready to scale up when needed, you won’t have to worry about it later.

- Don’t design complex software platforms: microservices are the way to go. Keep them simple and easy to maintain. They will be easier to scale, and not only from a technical point of view: imagine how much easier it is to hand over a single microservice, rather than one part of a complex piece of software, to a new dedicated development team.

- Never forget that performance is key: a killer SQL query may still be affordable with a small number of users, but it will become an issue as your platform grows. Make your application as efficient as possible, even when it doesn’t seem necessary.

- Don’t forget that anything can break, at any time. Keep your instances as simple as possible so that they are easy to operate. If one fails or starts misbehaving, just respawn it; don’t waste your time trying to fix or debug it. In an ideal world, they should all be stateless.

- Vertical scaling is a no-go. Choose the size of your instances based on the performance you want for a single request, but always spread multiple requests horizontally across instances. This pattern helps a lot with availability as well.

- Don’t be ‘legacy’: the world around you is moving very fast, and just standing by and watching it is not an option. New releases of software packages usually improve performance and efficiency, and new versions of the services your cloud provider offers usually improve a number of things, cost often being the main one. Running a legacy instance type just because your platform is too hard to upgrade to a newer operating system makes no sense and will kill your business in the long run.
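
To make the first two don’ts more concrete, here is a minimal Python sketch of handling replica failure at the application layer. It assumes each replica is exposed as a plain callable that raises `ConnectionError` when its region is unreachable; the region labels and the `read_with_failover` helper are hypothetical, not any cloud provider’s API. Reads fall through to the next region, so losing the main region degrades the service instead of taking it down.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical replica handle: (region label, function that reads a key)
Replica = Tuple[str, Callable[[str], str]]


class AllReplicasDown(Exception):
    """Raised only when no replica in any region could serve the read."""


def read_with_failover(replicas: Sequence[Replica], key: str) -> Tuple[str, str]:
    """Try replicas in preference order; return (region, value) from the first that answers."""
    errors: List[str] = []
    for region, fetch in replicas:
        try:
            return region, fetch(key)
        except ConnectionError as exc:
            # Never trust a single instance: record the failure and try the next region
            errors.append(f"{region}: {exc}")
    raise AllReplicasDown("; ".join(errors))


# Usage with two fake replicas: the main region is down, a remote read replica answers
def main_region(key: str) -> str:
    raise ConnectionError("main region unreachable")


def remote_replica(key: str) -> str:
    return f"value-of-{key}"


region, value = read_with_failover(
    [("main-region", main_region), ("remote-region", remote_replica)], "user:42"
)
print(region, value)  # remote-region value-of-user:42
```

Only reads fail over in this sketch; writes would still depend on the main region, which matches the goal of a degraded service rather than an unreachable one.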
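
One common way a query turns into a ‘killer’ as the platform grows is the N+1 pattern: one query to list rows, then one extra query per row. The sketch below is a hypothetical illustration using Python’s built-in `sqlite3` module; the `users`/`orders` schema and the `CountingConnection` wrapper are made up for the example. Both functions return the same totals, but the first sends 101 queries for 100 users while the second sends a single one.

```python
import sqlite3
from typing import Dict


class CountingConnection:
    """Illustrative wrapper around sqlite3 that counts statements sent to the database."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.queries = 0

    def execute(self, sql: str, params: tuple = ()) -> sqlite3.Cursor:
        self.queries += 1
        return self.conn.execute(sql, params)


def make_db(n_users: int = 100) -> sqlite3.Connection:
    """Build an in-memory database with a hypothetical users/orders schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total REAL);
        """
    )
    conn.executemany(
        "INSERT INTO users (id, name) VALUES (?, ?)",
        [(i, f"user{i}") for i in range(1, n_users + 1)],
    )
    # Five orders of 10.0 for every user
    conn.executemany(
        "INSERT INTO orders (user_id, total) VALUES (?, ?)",
        [(i % n_users + 1, 10.0) for i in range(5 * n_users)],
    )
    conn.commit()
    return conn


def totals_n_plus_one(db: CountingConnection) -> Dict[str, float]:
    # One query for the users, then one more per user: cost grows with the user count
    result = {}
    for user_id, name in db.execute("SELECT id, name FROM users").fetchall():
        (total,) = db.execute(
            "SELECT COALESCE(SUM(total), 0) FROM orders WHERE user_id = ?", (user_id,)
        ).fetchone()
        result[name] = total
    return result


def totals_single_query(db: CountingConnection) -> Dict[str, float]:
    # Same answer in one round trip: let the database join and aggregate
    rows = db.execute(
        """
        SELECT u.name, COALESCE(SUM(o.total), 0)
        FROM users u LEFT JOIN orders o ON o.user_id = u.id
        GROUP BY u.id, u.name
        """
    ).fetchall()
    return dict(rows)


conn = make_db()
slow, fast = CountingConnection(conn), CountingConnection(conn)
assert totals_n_plus_one(slow) == totals_single_query(fast)
print(slow.queries, fast.queries)  # 101 1
```

With a handful of users the difference is invisible, but the number of round trips in the first version grows linearly with the user base, which is exactly the kind of hidden cost that bites when the platform grows.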
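
The ‘respawn, don’t debug’ and ‘scale horizontally’ don’ts can be sketched together. The Python below is a toy model, not a real orchestrator: `Instance` and `Pool` are hypothetical names, health is a simple flag, and dispatch is plain round-robin. Because the instances are assumed to be stateless, any request can go to any instance, and replacing a broken one loses nothing.

```python
import itertools
from dataclasses import dataclass, field
from typing import List

# Hypothetical ID source for newly spawned instances
_next_id = itertools.count(1)


@dataclass
class Instance:
    """Toy stateless instance: it holds no data that would need to be recovered."""

    instance_id: int = field(default_factory=lambda: next(_next_id))
    healthy: bool = True

    def handle(self, request: str) -> str:
        return f"instance-{self.instance_id} handled {request}"


class Pool:
    """Fixed number of identically sized instances behind a round-robin dispatcher."""

    def __init__(self, size: int) -> None:
        self.instances: List[Instance] = [Instance() for _ in range(size)]
        self._cursor = 0

    def replace_unhealthy(self) -> int:
        """Respawn, don't debug: swap each unhealthy instance for a fresh one."""
        replaced = 0
        for i, inst in enumerate(self.instances):
            if not inst.healthy:
                self.instances[i] = Instance()
                replaced += 1
        return replaced

    def dispatch(self, request: str) -> str:
        """Spread requests horizontally instead of sending them all to one big box."""
        inst = self.instances[self._cursor % len(self.instances)]
        self._cursor += 1
        return inst.handle(request)


pool = Pool(3)                     # instances 1, 2, 3
pool.instances[1].healthy = False  # instance 2 starts misbehaving
pool.replace_unhealthy()           # replaced by a fresh instance 4
for req in ("a", "b", "c"):
    print(pool.dispatch(req))
# instance-1 handled a
# instance-4 handled b
# instance-3 handled c
```

In a real deployment, your cloud provider’s auto-scaling and load-balancing services would typically play these roles; the point of the sketch is only that the logic stays trivial when instances carry no state.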

There you have it. Now go and build!