Don’t buy servers.

No, please don’t. Not even for personal use.

Let me start from the beginning: during my relocation last year, I left my desktop computer behind. It hadn’t been my primary machine for a while and I was probably powering it on only once a month, but it was still my core repository for backups and long term storage.

As I went 100% cloud years ago (no USB drives, no external HDDs, etc) my “current” dataset is now online, synchronised with my laptop(s). Still, there are some hundreds of GBs of “cold” (as in: I will probably never need them again) pictures/docs/archives that I want to be able to access, even remotely, at any time. After exploring some mid-range NAS solutions, I ended up realising that despite having a reliable internet connection, my flat was not the best location for hosting it, so started looking around for a decent colocation space.

It didn’t take much time to figure out that space and power in a datacenter are so expensive that a NAS isn’t suitable nor effective for this purpose.

As a consequence…

MY-ZA is an HP DL320e Gen8 server, equipped with an E3-1240 v2 CPU, 32 GB of RAM, and 2×250 GB SSD + 4x1TB SATA drives. Dual PSU, P420i hardware RAID controller, iLO4, etc… …yes: a real server.

I’m sure you’re now wondering what the hell I am doing here. The answer is easy, and anybody with an engineering mindset can probably confirm: sometimes we need to spend time and energy in experiments even if we know they will fail, because what we want to figure out is how exactly they will fail.

To be honest, even if I knew this choice was sub-optimal at the very least, I was like: “Hey, what could go wrong? It’s just a server”.

Well, now I know the answer: anything – (and if you cross this with Murphy’s law…).

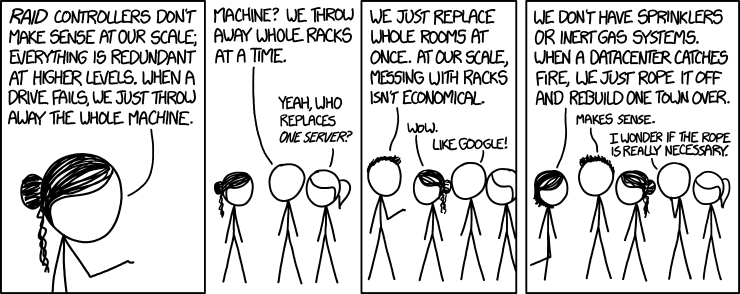

My background is in traditional IT, but looks like I quickly forgot about the pain of having to deal with bare metal. To make sure this doesn’t happen again, here’s a quick reminder that might also help you all:

- Servers are expensive: this is a $2800 machine (I’ve paid roughly 50% of that), that will cost around 70/80$ per month just by colocation and bandwidth. Moreover, in 2 years time it will be obsolete.

- Bare metal servers are… …heavy: arranging shipping back and forth costs time. And money, of course.

- They’re slow, reaaaaaaalllllly slooooooww. This thing wastes 10+ minutes just to get to the operating system boot. Don’t forget this if you’re doing something that requires a lot of reboots (like trying different RAID configurations, updating a newly installed Windows, etc). We’re now in an era where the boot time of an instance is shorter than what it takes to you, slow and inefficient human, to copy and paste connection details in your SSH client.

- What about the risk? Well, it’s huge. I have onsite support, but no spare parts. So, should something bad happen, the downtime will be counted in hours, at least.

- They don’t scale. This “thing” has already reached the maximum amount of RAM it can hold. What if I need more? I have two options, double the colocation space (and thus cost) and buy a similar second server, or buy a larger one to replace it and begin a slow, complex and painful migration.

- Agility? What? – You must manage it as you would do with a pet. If something breaks, repair it, if the OS is out of date, upgrade it. Well, in a world where if an instance is broken you immediately spin up a new one, having to fix an OS doesn’t seem appropriate.

- SSDs do have a well defined lifespan. This is not something you care about if you’re using a cloud hosting service, but here you should keep it in mind, as they will eventually die. Both at the same time, as their load will be similar.

After having spent the last 7 days (evenings to be fair, as I have a job during the day) on this project, I think I have definitely debunked the theory about cloud not being effective for personal workloads.

Project failed, time to terminat…

…no, wait, you can’t terminate a bare metal server: it’s an investment, it’s a long term decision, you can’t just roll back as you would do with a cloud instance.

Oh, God.

* don’t even try to understand my host naming convention. There are no standards, names are just random letters. Servers are cattle, not pets, right?